The Funderbeam Blog

Articles, interviews, case studies, announcements and more.

Welcome to The Funderbeam Blog.

VentureWave Leads $40m Investment in Funderbeam to Shape the Future of Venture Markets

VentureWave, an Irish-based venture private equity group committed to impact investing and supporting high-impact entrepreneurs,

In the Hot Seat – Promoty

Thank you for taking our Hot Seat, Aleks Koha, CEO of Promoty. How did the year 2022 turn out for your company? 2022 was full of

In the Hot Seat – CostPocket

Thank you for taking our Hot Seat, Martin Sookael, CEO of CostPocket. How did the last year turn out for your company? Over 7000

In the Hot Seat – Fermi Energia

Thank you for taking our Hot Seat, Kalev Kallemets, CEO of Fermi Energia. How did the year 2022 turn out for your company? Very positive

Funderbeam and Block Dojo announce partnership

Funderbeam, the global investing and trading platform, and Block Dojo, a global blockchain incubator, are delighted to announce

In the Hot Seat – Helmes

Thank you for taking our Hot Seat, Andres Kaljo, Partner and member of the management board at Helmes. Helmes is privately trading

Financial Feminism – Samantha Wood – Wood Mitchell

On this week’s podcast, Oli talks to Samantha Wood, financial planner and wealth manager at Wood Mitchell. Samantha describes

In the Hot Seat – Shroomwell

We welcome Shroomwell CEO Silver Laus to the Hot Seat for a look back at 2022 and forward to 2023. How did the year 2022 turn out

In the Hot Seat – FitSphere

FitSphere CEO and co-founder Karl Vihul takes our hot seat for a look back at 2022 and plans and outlook for 2023. 1. How did the

In the Hot Seat – Tactical Foodpack

Tactical Foodpack CEO Sverre Puustusmaa takes the hot seat for a look back at 2022 and plans and outlook for 2023. 1. How did the

Funderbeam Podcast – Cathy White – CEW Communications

On today’s podcast, Oli talks to Cathy White about Venture Capital PR & Communications. Cathy discusses her road to working

Funderbeam Podcast – Kristina Pereckaite – Founder of South East Angels

On today’s podcast, Oli talks to the Founder and Managing Director of South East Angels, Kristina Pereckaite, about founding an

In the Hot Seat – Snabb

Thank you, Kustas Kõiv, CEO of Snabb, for taking our Hot Seat to look back at 2022 and discuss the plans and outlook

In the Hot Seat – HUUM

1. How did the year 2022 turn out for your company? We are happy to say that 2022 was successful for HUUM. When we made our annual

Ark2030 & Funderbeam Announce Partnership

Funderbeam and Ark2030 are proud to announce a partnership that will see Funderbeam provide a Private Market for Ark2030’s Investor

In the Hot Seat – Bikeep

1. How did the year 2022 turn out for your company? It turned out to be another great growth year. We increased our total orders

In the Hot Seat – Adact

Adact is currently using Funderbeam’s Private Syndicate solution, but we hope one day, the shares will be available for trading also

Funderbeam Podcast – Rod Beer, MD of UKBAA

The Past, Present & Future of Angel Investing – Rod Beer of UKBAA. On this week’s Funderbeam Podcast, Oli talks to

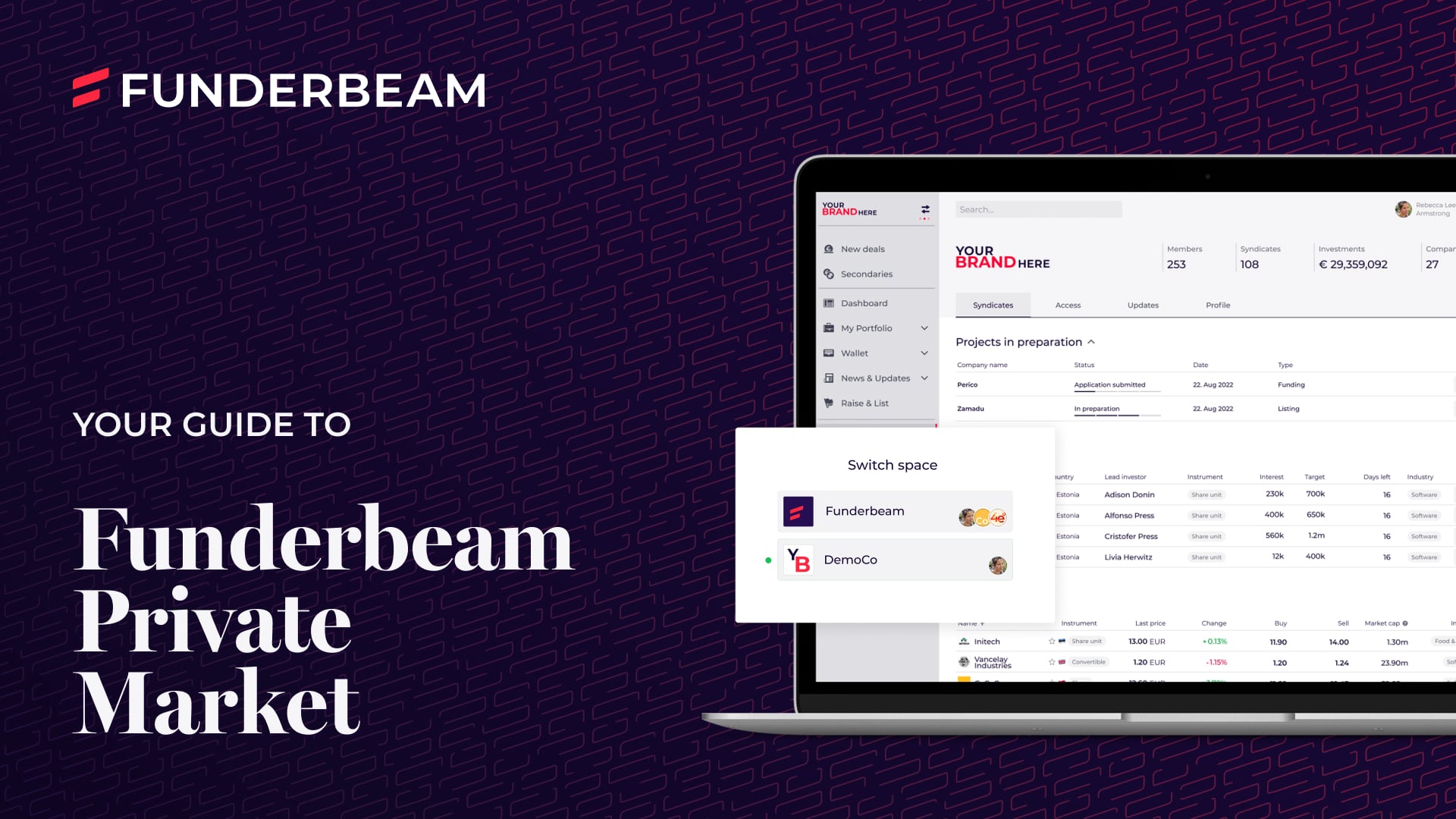

Funderbeam Private Market: A Guide

Your Guide to Funderbeam Private Market: a guide to Funderbeam’s Private Market product for Investor Networks. An Introduction

You should ensure you carefully read the Risk Disclosure Statement before deciding to proceed with any investment or transaction, including making a purchase of securities via the Marketplace. Funderbeam has taken steps to ensure that company and securities offering information is clear, fair and not misleading in accordance with its internal verification procedures. Funderbeam does not provide investment advice or any recommendation to invest. Any investment opportunity on this website should not be considered as an offer to the public and is not directed at or offered to anyone to whom it may not be so directed or offered, or located in a jurisdiction where it is unlawful to do so.

This page provides you with an overview of the services provided by different entities belonging to Funderbeam Group. On this page, we generally refer to the group as “Funderbeam”, “we”, “us” or “our”.

It is important to note that funds are raised, investments are made and trade orders are placed through three service provider entities: Funderbeam Markets AS (FBAS) (authorised and regulated by the Estonian Financial Supervision Authority under permit 4.1-1/212), Funderbeam Markets Limited (FML) (authorised and regulated by the UK Financial Conduct Authority under FRN 794918), and (for trade orders only) Funderbeam Markets Pte. Ltd. (FB Pte). FBAS and FML are MiFID investment firms.

A Funderbeam client (whether investor or company) is a client of the service provider and under the protection of the requirements of the regulator under which that service provider operates: an EEA client’s service provider is FBAS; a UK/non-EEA/non-Singapore client’s service provider is FML; and a Singapore client’s service provider is FB Pte.

The Marketplace is operated as an organised market by Funderbeam Markets Pte. Ltd. in Singapore as a Recognised Market Operator (RMO) under the supervision of the Monetary Authority of Singapore. FBAS and FML are Trading Members of the RMO’s Marketplace. Access to the Marketplace for EEA and non-EEA clients is only provided by and through such clients’ service provider (i.e. FBAS or FML). The Marketplace does not provide services directly to investors outside Singapore.

With respect to any securities or investments offered by a US domiciled Fundraising Company, by visiting this site you confirm you are not a US resident or US person (as defined in Regulation S of the U.S. Securities Act of 1933) and you understand and agree that you are not acquiring any Investments for the account or benefit of any such US resident or US person. No investment opportunity in a US domiciled Fundraising Company is directed at US persons.